Case Studies

How have researchers used DataShop in the past? What were their experiences, and what did they gain? The case studies below describe several research goals and how DataShop was used to pursue them.

Using DataShop to discover a better knowledge component model of student learning

Ken Koedinger

This case study illustrates how DataShop can be used to explore a dataset, generate a hypothesis for improving a cognitive model, and test that hypothesis both visually and statistically within DataShop. More broadly, it shows how data from educational technology can yield insights into student thinking and learning.

Future case studies

Improving the KC model for the Andes self-explanation study

Bob Hausmann

University of Pittsburgh learning sciences researcher Bob Hausmann uses DataShop to perform an exploratory analysis of data collected with the Andes physics tutor. He uses learning curves to locate an unusually difficult section of the tutor, then experiments with a different way of conceptualizing one of the knowledge components involved in problem solving. He builds this alternative knowledge component model in Excel, imports it into DataShop, and compares the learning curves of the original and revised models. Bob also describes his general workflow for moving data from the Andes tutor into DataShop and how he performs quality assurance (QA) on those data.

Exploratory data checking and analysis before export for statistical analysis

Kirsten Butcher

Learning sciences researcher Kirsten Butcher uses DataShop to sanity-check log data from a study of how to help students integrate visual and verbal information during learning. She prunes the dataset by removing data associated with irrelevant knowledge components, and a time-based learning curve analysis reveals differences between conditions. She then exports her data for statistical analysis.

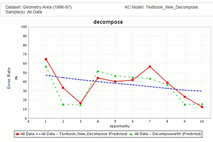

Identifying under- and over-practiced knowledge components

Ken Koedinger, Hao Cen

Carnegie Mellon Professor Ken Koedinger and post-doctoral researcher Hao Cen used DataShop learning curves to identify over-practiced and under-practiced knowledge components in the Cognitive Tutor Geometry (1996) dataset. With this knowledge, they analyzed and optimized the Cognitive Tutor's knowledge-tracing parameters for that unit to improve learning efficiency. When analyzed in DataShop, the new parameters produced smoother learning curves with fewer practice opportunities (and therefore less time): the new tutor gave more opportunities to practice difficult knowledge components and fewer to practice easy ones. The data were exported to establish that students using the new tutor spent less instructional time than those using the old version. (DataShop's student-problem aggregate now makes this comparison easier.) A separate analysis of paper pre- and post-test data showed that, despite spending less time on the unit, students using the new tutor achieved equivalent robust learning gains.